In 2026, AI will increasingly be defined by where it runs. As intelligence is deployed across factories, retail environments, and remote operational sites, the focus will shift away from raw compute power toward where data is generated, how quickly decisions must be made, and how efficiently AI can be deployed at massive scale.

The Operational Reality Driving Edge AI

Over the past several years, enterprises have increasingly recognized that their most valuable data is created outside centralized environments: on factory floors, in stores, and across geographically distributed operations. This shift is driving new priorities for AI: real-time inference, lower power consumption, cost efficiency at scale, and secure deployment across tens of thousands of devices.

At the same time, edge AI is reshaping how work gets done. Frontline workers are gaining new capabilities through computer vision, small language models, and locally deployed intelligence that enable faster troubleshooting, higher-quality output, and more autonomous decision-making. These changes are forcing organizations to rethink not just their technology stacks, but also how they manage operations, data, and infrastructure at the edge.

Against this backdrop, Padraig Stapleton, SVP and Chief Product Officer at ZEDEDA, offers the following predictions on how these dynamics are expected to shape edge AI through 2026.

Prediction 1: The Edge Inference Wars Are Coming

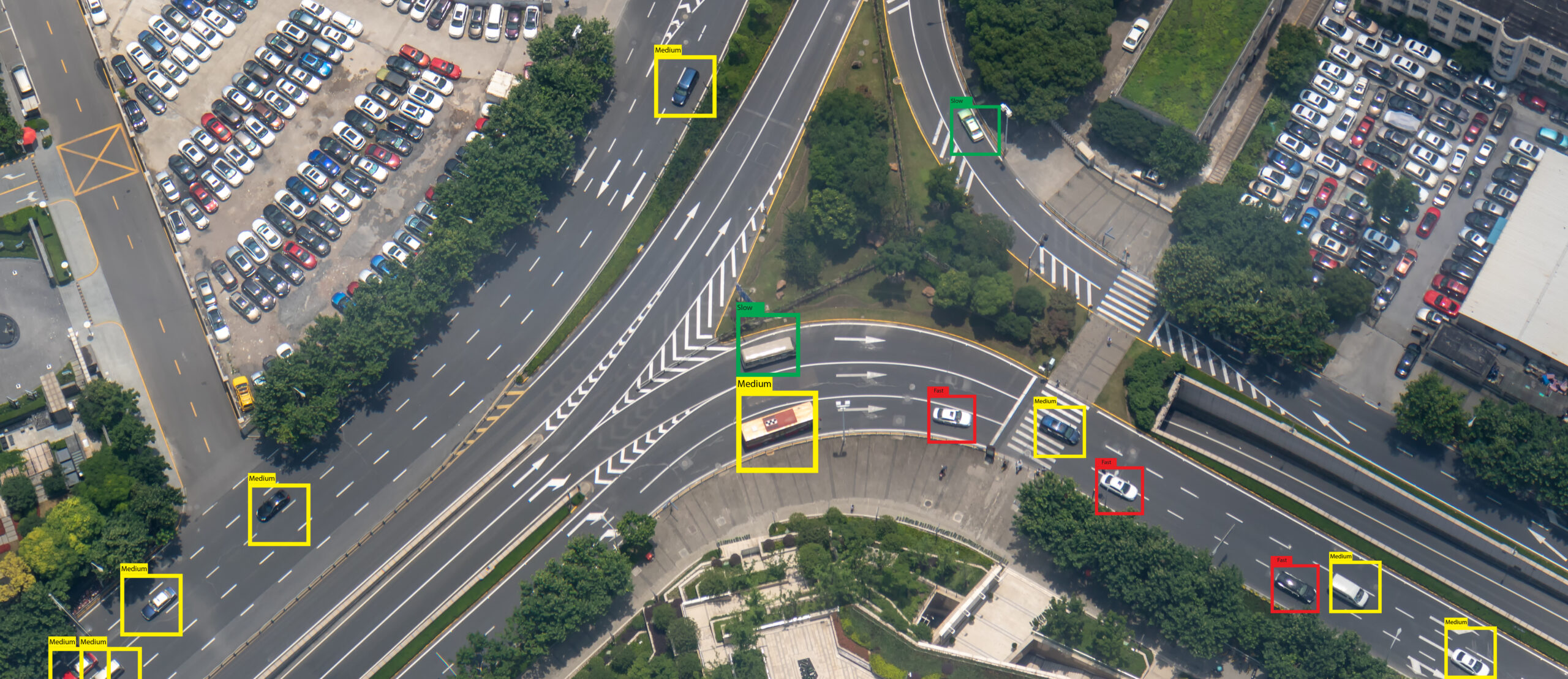

By late 2026, the real competitive battleground in AI will shift to edge inference. While the data center remains important, organizations are realizing that the data that matters most is generated at factories, retail locations, and remote sites, where real-time decisions are critical.

At scale, edge deployments introduce very different constraints. When organizations are rolling out 20,000 devices, cost per unit and power consumption become decisive factors. These realities open the door for a broader ecosystem of silicon providers. NVIDIA, Qualcomm, AMD, and specialized chipmakers are positioned to compete aggressively in edge inference, carving up a market shaped less by peak performance and more by efficiency, flexibility, and scale.

Enterprises will need to operate across increasingly diverse hardware environments, making platform-level abstraction and orchestration essential.

Prediction 2: Frontline Workers Get AI Superpowers

By 2026, edge and physical AI will push decision-making autonomy closer to the frontline. Workers who have never had access to this level of intelligence will be supported by AI systems that operate directly at the point of work.

Collaborative robots will increasingly take on repetitive and physically demanding tasks such as material movement, assembly, and basic operations. At the same time, humans will focus on judgment-driven work—quality inspection, exception handling, and finishing tasks—augmented by computer vision and small language models running locally.

The challenge lies in delivering these capabilities securely and consistently across thousands of locations, often staffed by workers who are not IT experts. Companies that succeed in managing distributed edge AI environments will gain a significant advantage in industries like manufacturing, oil and gas, and automotive, where labor shortages continue to put pressure on operations.

Prediction 3: Small and Vision Language Models Break Through at the Edge

In 2026, small and vision language models will move beyond research environments and into practical, production-grade edge deployments. Rather than relying on constant cloud connectivity, these models will deliver immediate, localized value.

One clear example is embedding equipment manuals and operational documentation directly into small language models, allowing frontline workers to troubleshoot error codes or operational issues on the spot. Similarly, vision language models at the edge can support camera-based inspection and monitoring by processing visual data locally and pairing it with language-based guidance. This approach delivers expertise where it’s needed, when it’s needed, without introducing latency or dependency on always-on connectivity.

This shift represents a move away from cloud-dependent generative AI toward practical, on-site intelligence that supports day-to-day operations and empowers workers with instant access to relevant knowledge.

Prediction 4: From Centralized Control to Edge Autonomy

In 2026, organizations will continue restructuring their operational models, moving from centralized decision-making at headquarters to distributed autonomy at edge locations.

Edge computing platforms will enable enterprises to store data locally at manufacturing plants, retail sites, and operational facilities. Local teams will have greater ability to manage data, deploy and upgrade applications, and optimize performance in real time, while still operating within enterprise-level governance and security frameworks.

This transition reflects the operational realities of scale and distribution. As edge environments grow, autonomy at the local level becomes a requirement, not a preference.

Prediction 5: IT and OT Convergence Becomes Standard Operating Procedure

The long-standing divide between IT and operational technology will continue to dissolve in 2026. Manufacturers are increasingly applying IT workflows, containerization, and modern management tools directly to factory-floor environments.

This convergence enables more consistent infrastructure strategies, bringing cybersecurity, automation, and lifecycle management into industrial operations in a unified way. As a result, organizations can move faster while maintaining the reliability and safety that operational environments demand.

Rather than operating as separate domains, IT and OT are becoming part of a single, coordinated operational model.

Looking Ahead

By 2026, edge AI will be firmly established as operational infrastructure. The focus will shift from experimentation to execution: deploying intelligence at scale, empowering frontline workers, and managing increasingly complex edge environments with consistency and control.

For organizations navigating this transition, success will depend on the ability to securely orchestrate diverse hardware, applications, and AI workloads across distributed environments, turning edge complexity into a strategic advantage.