When I talk to customers about the edge, I always try to get to where the rubber meets the road. Over the last several years, a lot of our conversations have centered on infrastructure: how to securely deploy applications at the edge, manage distributed devices, and orchestrate workloads across all the different hardware that shows up in the real world. That work was foundational, and it is exactly where ZEDEDA led: proving that you can manage and orchestrate tens of thousands of application instances in some of the most demanding environments on the planet.

But that is no longer the whole story. Increasingly, the question I hear is not “Can I manage my edge infrastructure?” It is “How do I take the power of AI and make it work where my business actually operates?” That shift, from simply standing up edge compute to actually running intelligence at the edge, is what this evolution is about.

Why Edge Intelligence Matters Now

Most enterprises no longer need convincing that AI is valuable. What they are wrestling with is how to move from ideas and pilots to day-to-day operations.

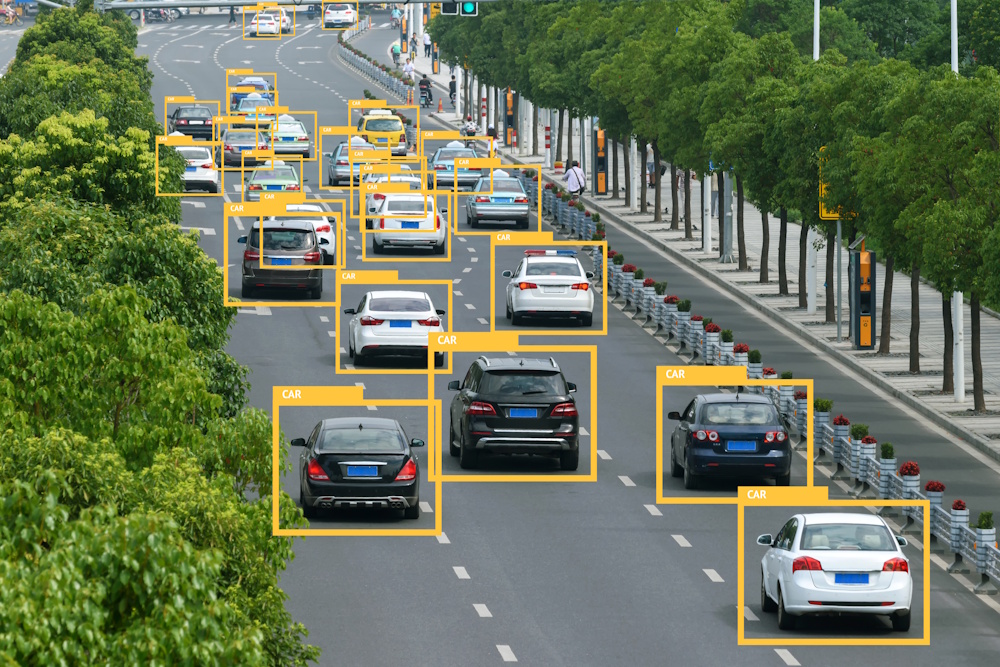

In automotive manufacturing, the conversation usually starts with quality and efficiency. As an example, large automakers are looking at deploying both traditional machine learning models and newer generative AI models across their manufacturing locations. Their goal is simple: use AI at the edge to catch issues sooner. That could mean inspecting welds in real time, so defects never turn into recalls six or twelve months down the road, or analyzing paint on the line, where shiny surfaces make defects hard to spot with the naked eye, so issues get sent back for rework before the car ever leaves the plant.

In retail, the pressure comes from razor-thin margins. Retailers want to run their stores more efficiently, from inventory management to shrink reduction. They are using edge AI to visually inspect shelves and understand what is happening in real time, to monitor self-checkout lanes and ensure items are scanned and paid for, and to tap into data from RFID-tagged merchandise so they can see what is selling and what may be walking out the door.

In oil and gas, edge intelligence has become central to how operators run complex, distributed assets. They collect data at the well, run inference locally, and adjust how they extract based on whether a well is just coming online or in a more mature phase. They analyze flare stacks to understand gas composition and environmental impact. Along pipelines, they use sensors to capture acoustic anomalies and run on-device inference to detect leaks quickly enough to shut upstream valves in milliseconds instead of seconds, limiting damage and downtime.

None of this is theoretical. These are real use cases I hear when I ask customers, “What would you do if you could bring the full power of AI right to the edge of your operations?”

The Gap Between Cloud AI and the Edge

The sticking point is usually not the model itself; it is everything around it.

In the cloud, teams can train or fine-tune models in environments like Amazon SageMaker, Google Vertex AI, Snowflake, or Databricks with essentially unlimited compute and storage. But getting that model out of a Jupyter notebook and into a factory, a store, an oil platform, or a remote pipeline segment is a different challenge entirely. At that point, you’re dealing with constrained hardware, intermittent connectivity, security and safety requirements, regulatory constraints, and the need to operate at scale across hundreds or thousands of sites.

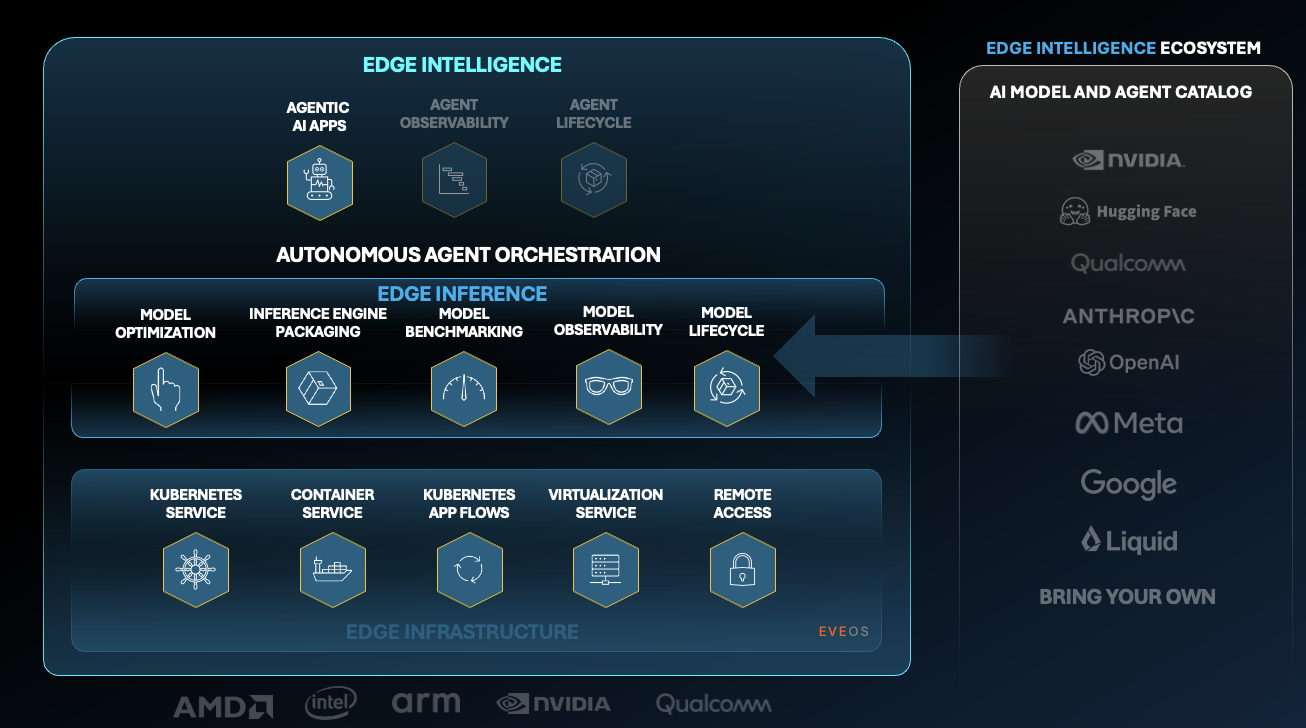

That is the operational gap we built ZEDEDA’s Edge Intelligence Platform to close. ZEDEDA has spent nearly a decade focused on the distributed and physical edge, delivering an open, secure, hardware-agnostic orchestration layer that already manages tens of thousands of edge nodes and applications. With the Edge Intelligence Platform, we are extending that foundation so customers can orchestrate the entire edge AI lifecycle in a single control plane, from creating and deploying models and defining agent behavior to optimizing inference and governing distributed operations.

ZEDEDA Edge Intelligence Platform

Our recent Edge AI Survey underscores why this matters. Forty-seven percent of enterprises say they now use hybrid cloud–edge architectures, and 41% say managing AI workloads across distributed environments is challenging. Enterprises are already trying to push AI closer to where work happens; what they lack is a practical, repeatable way to do it.

Making AI Deployment at the Edge Practical

With this launch, the emphasis is very much on making it easier to deploy and operate AI at the edge, not just to stand up infrastructure.

We have added a lot of tooling into the platform so that, if you are a customer with a model you have trained in the cloud, you can bring it into the real world with far less friction. That includes automated deployment workflows, real hardware benchmarking so you can validate performance on devices like NVIDIA Jetson or Intel-based systems before going to production, and enterprise-grade governance with approval workflows, audit trails, and one-click rollbacks.

We are also leaning into new patterns like agentic AI and advanced vision applications. Working with partners such as NVIDIA, we are delivering templates and blueprints that show customers how to build real-world use cases, like PPE detection on a manufacturing line or loss prevention in a store, and how to change rules and personas without constantly redeploying models and applications.

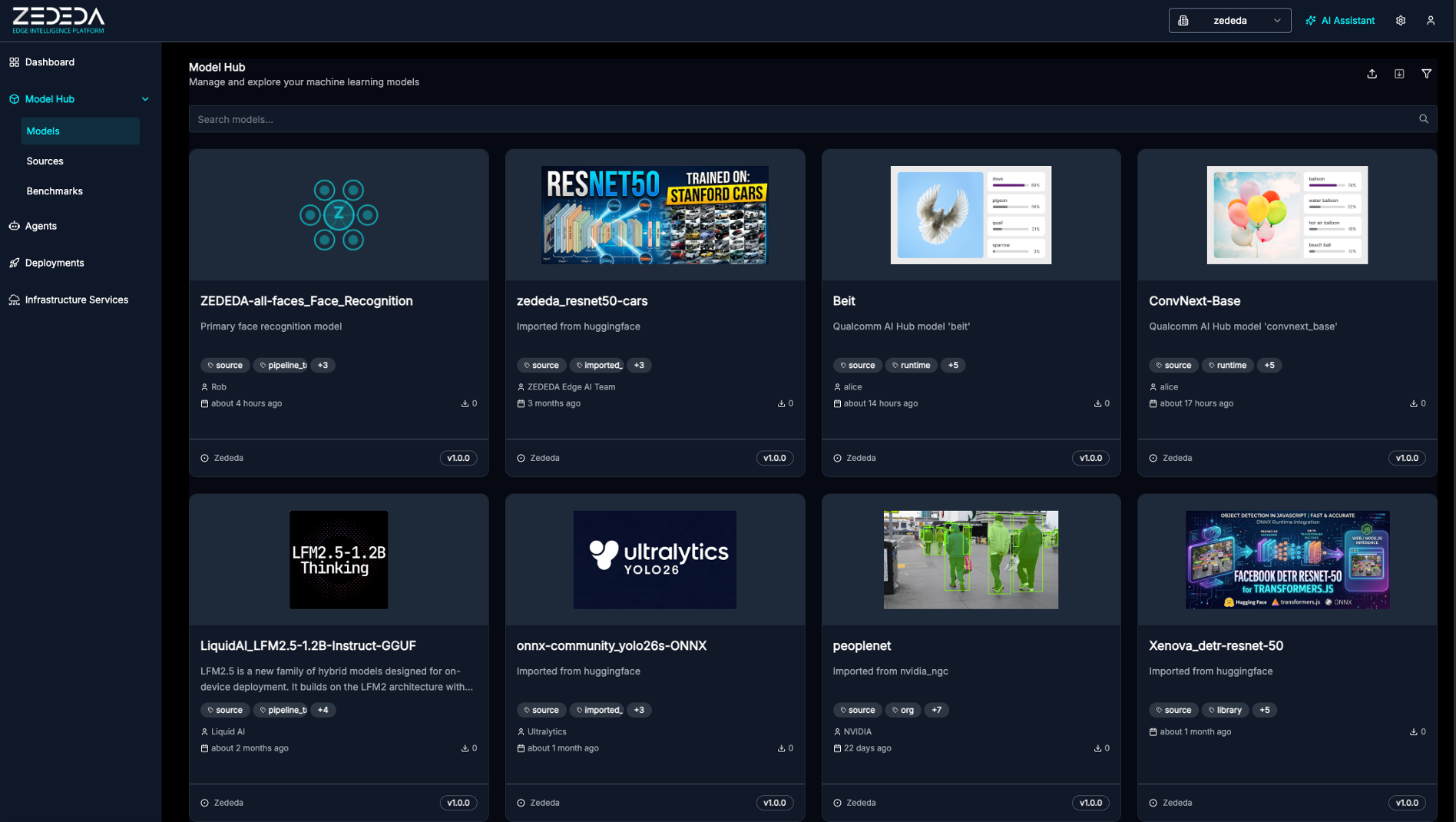

Model Hub within ZEDEDA Edge Intelligence Platform

On the model side, our Marketplace connects to the model registries customers already rely on. Because of the open nature of the platform, you can pull models from public sources such as Hugging Face, connect to services like Snowflake, Databricks, or SageMaker, or point to your own internal model registry sitting inside your private network. That is especially important in industries where models are treated as core intellectual property and never leave the corporate perimeter.

Matching Offerings to Customer Maturity

Not every customer is starting from the same place in their edge AI journey. Some are experimenting; some have sophisticated models and clear use cases; many are somewhere in between. ZEDEDA’s offerings are designed to reflect that.

Here is how I think about it when I talk with customers:

| ZEDEDA Offering | Best For | Key Benefits |
|---|---|---|
| Edge Intelligence Appliances | Teams just getting started with edge AI | Turnkey stack: validated hardware, runtime, models, and basic vertical apps so you can “kick the tires” quickly in your own environment. |
| Edge Intelligence Platform | Teams with their own models and hardware strategy | Bring-your-own-model and bring-your-own-device orchestration, taking models from cloud training to secure, scalable edge deployment. |
| Edge Intelligence Labs | Teams that need hands-on help | Embedded experts who co-develop, optimize, and tune deployments for your specific environment, from pilot to production. |

The important part is that you do not have to fit into a one-size-fits-all model. You can start with Appliances to validate value, lean on Labs when you need additional expertise, and standardize long-term on the Edge Intelligence Platform as you scale.

What This Means for Existing Customers

For existing ZEDEDA customers, this is a continuation of our strategy, not a reset. Everything we are announcing is built on the same orchestration foundation you already use. The Edge Intelligence Platform is fully backward-compatible; it adds capabilities rather than forcing you to re-architect what you have in the field.

In practice, that means you can keep running your current workloads while layering in new edge AI capabilities when you’re ready, using the same secure, open, hardware-agnostic platform you trust today.

Bringing AI Out of Theory and Into the Physical World

We have reached an inflection point. AI is no longer something that lives only in centralized data centers or cloud regions; enterprises increasingly need it to operate where data is generated and where physical work happens. From my perspective, the future of AI will be defined less by what you can model in the cloud and more by what you can run safely, efficiently, and at scale at the edge.

That is why ZEDEDA is focused on edge intelligence. Our job is to bridge the gap between the advances we see in AI, whether that is traditional machine learning or the latest generative models, and the reality of running a factory, a store, a pipeline, or any other distributed operation. If we can make it straightforward for customers to deploy, monitor, and update AI models where their business actually operates, then we are doing our job.

And based on the conversations I’m having every week, that is exactly where enterprises want to go next.

Do you want to be among the first to experience the ZEDEDA Edge Intelligence Platform? Sign up now to join the list!